The EU AI Act is a risk-based regulation, not a technology ban. Contrary to common belief, it is already in force. Two deadlines have passed, and the main enforcement date is four months away. It applies to any company that develops or deploys AI affecting people in the EU, regardless of where the company is headquartered. Risk is determined by application, not by the technology itself. The main enforcement deadline is 2 August 2026, and most companies have yet to act due to lack of clarity.[1]

What it is and why you should care

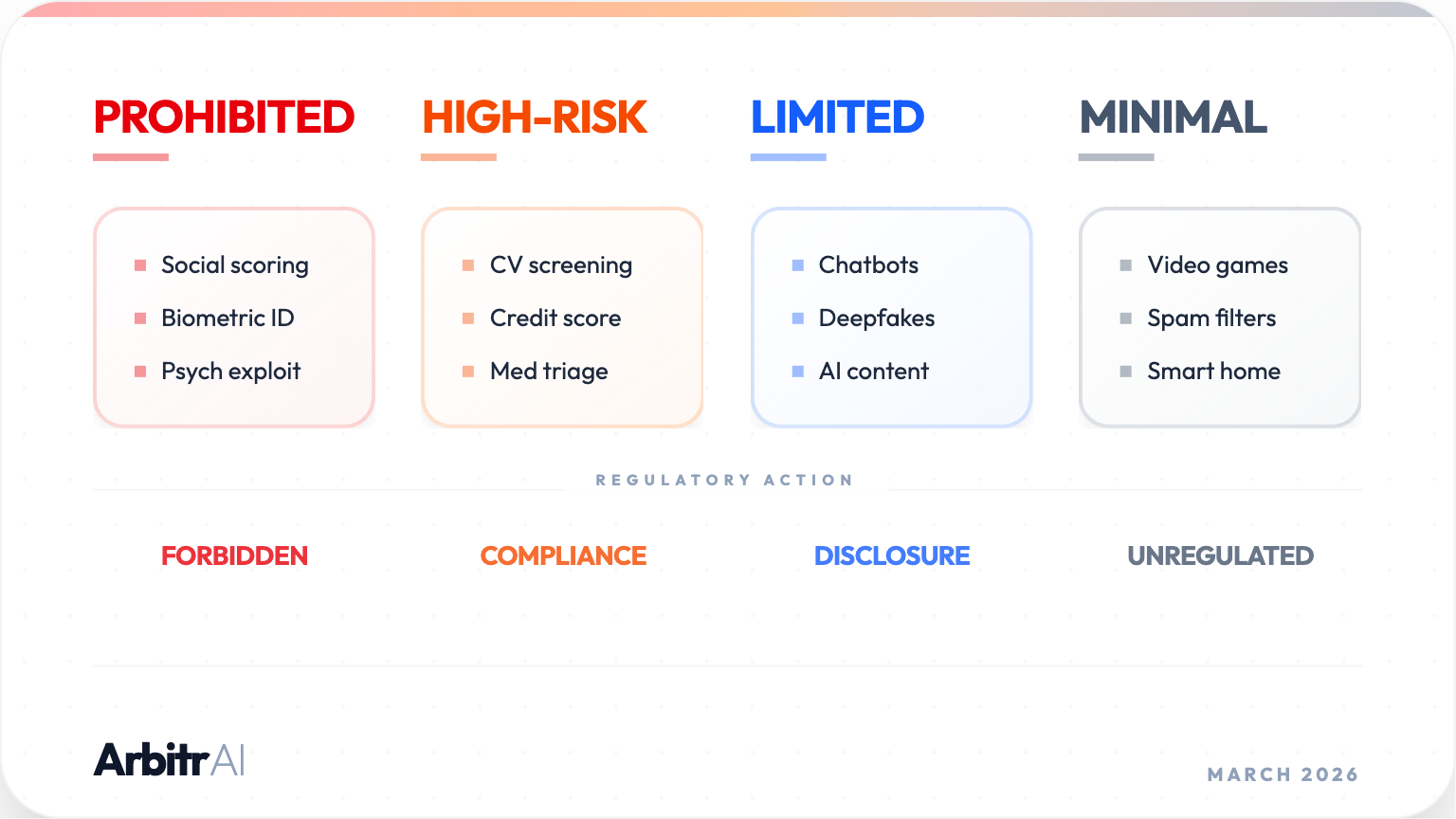

Rest assured: not all applications of AI are treated with equal scrutiny. The Act creates a four-tier risk ladder: prohibited, high-risk, limited-risk, and minimal-risk.[1] Most obligations fall on those that deploy the AI system - in a professional context - within EU reach. The Act also applies to internal usage, not just customer-facing products; something that catches most companies off-guard.

Non-compliance fines can reach up to EUR 35 million or 7% of global annual turnover for prohibited practices, and up to EUR 15 million or 3% for other violations. Fines can also apply when companies provide misleading or incomplete information to regulators. These are maximum amounts, however, and the Act explicitly requires economic viability of the company to be considered.[2]

The Phased Rollout Timeline

The European Commission AI Act Service Desk provides a clear implementation timeline.[3]

EU AI Act Interactive Timeline

2 Feb 2025

Prohibited AI practices are banned. AI literacy obligations are in force.

2 Aug 2025

GPAI obligations apply. National authorities are designated and penalties are enforceable.

2 Aug 2026

Main enforcement date. High-risk and transparency obligations enter into force, with full national and EU-level enforcement for most AI products now in production.

2 Aug 2027

Final phase. AI embedded in regulated products (medical devices, machinery, safety components) comes fully into scope. Last grace period closes for GPAI models placed on the market before August 2025.

Risk Classification

This is where most misunderstanding stems from.

The Act classifies systems based on what they do and in what context, not on the underlying technology. A system is high-risk if it falls in one of eight domains: biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, and justice.[4]

The OCR example makes this concrete. Extracting line items from an invoice is not high-risk. The same extraction technology used to screen CVs and filter job applicants is high-risk. This explicitly listed under employment and worker management in Annex III.[4]

Two companies running identical underlying technology can land in completely different compliance categories depending on what their product does with the output.

The underlying technology does introduce one nuance. Modern document automation increasingly uses LLMs rather than classical OCR pipelines, and the Act treats these differently. LLM providers carry heavy provider-level obligations under the Act, including technical documentation, copyright compliance, and training-data transparency. If you call one of their models via API, those obligations stay with them because you are a downstream deployer. Only if you significantly retrain or fine-tune a foundation model to the point of fundamentally altering its capabilities could you inherit provider-level obligations, which is a high bar for most teams.[5]

What 'due diligence' requires

The Act does not demand perfection. It demands that you can demonstrate its concerns were taken seriously when requested.

Audit trails. Which model, which version, which prompt, on what input, producing what output. AI systems must be designed for record-keeping. Event logs should be automated to identify risks and capture substantial modifications throughout the lifecycle.[6]

Model and prompt versioning. Substantial or unforeseen changes in model version, provider, or system context (prompt, tools, access rights) can become legal triggers. Document what was in production and when.[7]

Data governance. GDPR remains central; the Act sits on top of it. Anonymize personal data at ingestion where full identity data is unnecessary. If you call model APIs, verify contractually that providers cannot train on your data.[8]

Human oversight. AI systems should be designed to allow meaningful human oversight. In practice, a non-technical decision-maker should be able to review, understand, and challenge what the system is doing.[9]

Concrete steps

- Map your AI surface area - list every AI-powered feature, then classify by application context against Annex III to gauge your exposure.[4]

- Version everything - model name, version, system prompt, deployment date. Log changes with timestamps.

- Tighten data handling - anonymize at ingestion and verify model-provider data-use policies in writing.

- Build audit-ready data flows - every internal and external data touchpoint should have a traceable record of what happened to it.

Compliance does not need to be a separate workstream or a time sink for your developers. We built ArbitrAI with this in mind: audit trails, model versioning, and data flow documentation are not addons; they are part of how the platform runs.